One of the fastest-developing forms of technology is artificial intelligence (AI). AI is controversial because, while it has delivered numerous benefits, many fear its role in humanity’s technological future.

AI is any digital tool that is created to mimic or recreate human intelligence. This can include any computer program that can perform tasks such as reasoning, discovering meaning and learning from the past.

AI and its recent developments have brought many benefits that have altered our society. It has streamlined important processes within corporations, schools and hospitals, enhanced our research and data analysis capabilities and reduced the potential for human error within many industries. However, as AI continues to expand in its abilities, one cannot ignore the dangers it may pose.

There are many risks associated with AI technology, but for college students and young adults, its threat to privacy and ability to cause job displacement are sounding alarms and warranting a course of action for the future.

In order to protect people from the dangers associated with the future of AI, governments should consider legislation that will secure people’s rights against it.

As many users of social media may have noticed, AI is growing in its ability to perform tasks that entertain us. Based on current trends, many people scrolling through social media will stumble upon videos that showcase AI software replicating the voices of famous artists in order to make it sound like they are singing songs they have never sung in real life.

While it is fun to hear Drake sing a Hannah Montana song on platforms such as TikTok, the capabilities of AI that underlie these videos can be unsettling to think about, especially when it comes to those who are not using AI in good fun.

Left in the hands of scammers, AI can become a weapon. In March 2023, the Federal Trade Commission released an alert that warned people of phone calls that could sound like the voice of a loved one but, in reality, could be an AI-generated ploy for scammers to steal money.

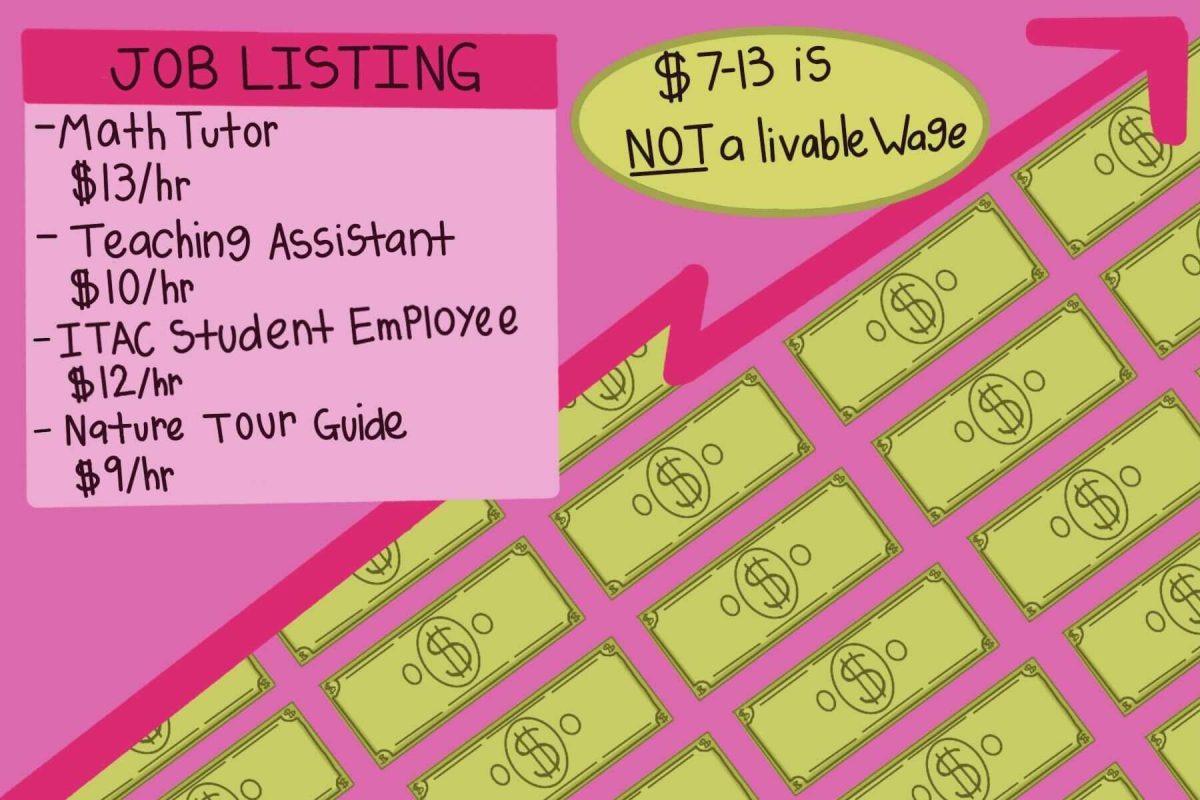

Within the discussions about the future of AI, another growing topic is the potential for new AI to displace jobs.

For students at Texas State, the hovering presence and potential of AI in the future job market can be unnerving. Many predict that new automation will affect a wide range of careers, including those of lawyers, financial specialists, accountants, medical workers and many other professionals.

Because of its serious threats to security and privacy, on top of its threat to the future job market, it is reasonable to wonder if AI is reaching a point that calls for regulation, and there are debates about who should do it. According to the American Bar Association, the regulatory framework surrounding artificial intelligence is still in its early stages.

Compared to the European Union, which just took steps towards passing an AI Act that will regulate AI in terms of its threat to privacy, the federal government of the U.S. has made less progress.

Many professionals representing other countries have expressed the need for the U.S. to join the efforts towards controlling the power of AI. However, the U.S. has received pushback from large tech companies.

Those in favor of government regulations for AI argue that it is dangerous to allow large technology companies and other powerful corporations and businesses to set the agenda.

The guidelines that currently exist are principle-based and not technology-based. This means that agencies such as the Federal Trade Commission focus on principles, such as the right to privacy, without actually targeting or focusing on AI itself. Some lawyers and advocates argue that legislation might need to become more technology-specific instead of neutral.

Technology-specific laws would be closer to the framework of the European Union’s approach to an AI Act. The regulatory outline of its AI Act legislates on the specific technology so that it can provide clear rules regarding the uses of AI.

Another approach that follows the European Union’s process is to begin legislation by focusing on the more dangerous components of AI. As explained by lawyers in the APA journal’s podcast, the European Union’s framework for AI legislation focuses on the high-risk aspects of AI, such as data and privacy protection.

Following this approach could be beneficial to the U.S. because focusing on the high-risk aspects of AI avoids squashing its benefits through overregulation.

However, in the U.S., passing regulatory legislation on a federal level is difficult. Although there is no national regulation of AI, there are many agencies that create guidelines for companies to follow and lawmakers to use as a starting point.

The progression of technology can be amazing. It changes our world constantly and oftentimes for the better. However, in order to prevent technology from overshadowing the rights of people, lawmakers must contemplate legislation that will regulate its dangers.

-Faith Fabian is an English sophomore

The University Star welcomes Letters to the Editor from its readers. All submissions are reviewed and considered by the Editor-in-Chief and Opinion Editor for publication. Not all letters are guaranteed for publication.